It’s no secret that getting data right is a constant battle: datasets need to be continually refined, reworked, and analyzed. Even the most comprehensive datasets lose accuracy as they age, because the world’s POIs change daily and data that is left unrefreshed falls out of step with them. Unfortunately, most people working in the geospatial industry are very familiar with this issue. It’s the reason our flat file customers receive updated data at set cadences, and our API customers query a dataset that is updated in real time. In the case of POI and human movement, old data is bad data.

Data isn’t a fine red wine. The idea that “old” really does mean “bad” applies not only to data, but also to the machine learning (ML) models that predominantly source and build the datasets. Think of your data as a chain, where the old proverb “only as strong as the weakest link” rings true. If there is a fundamental flaw in the process that generates the data, then the data, and any subsequent use of it, will reflect that flaw.

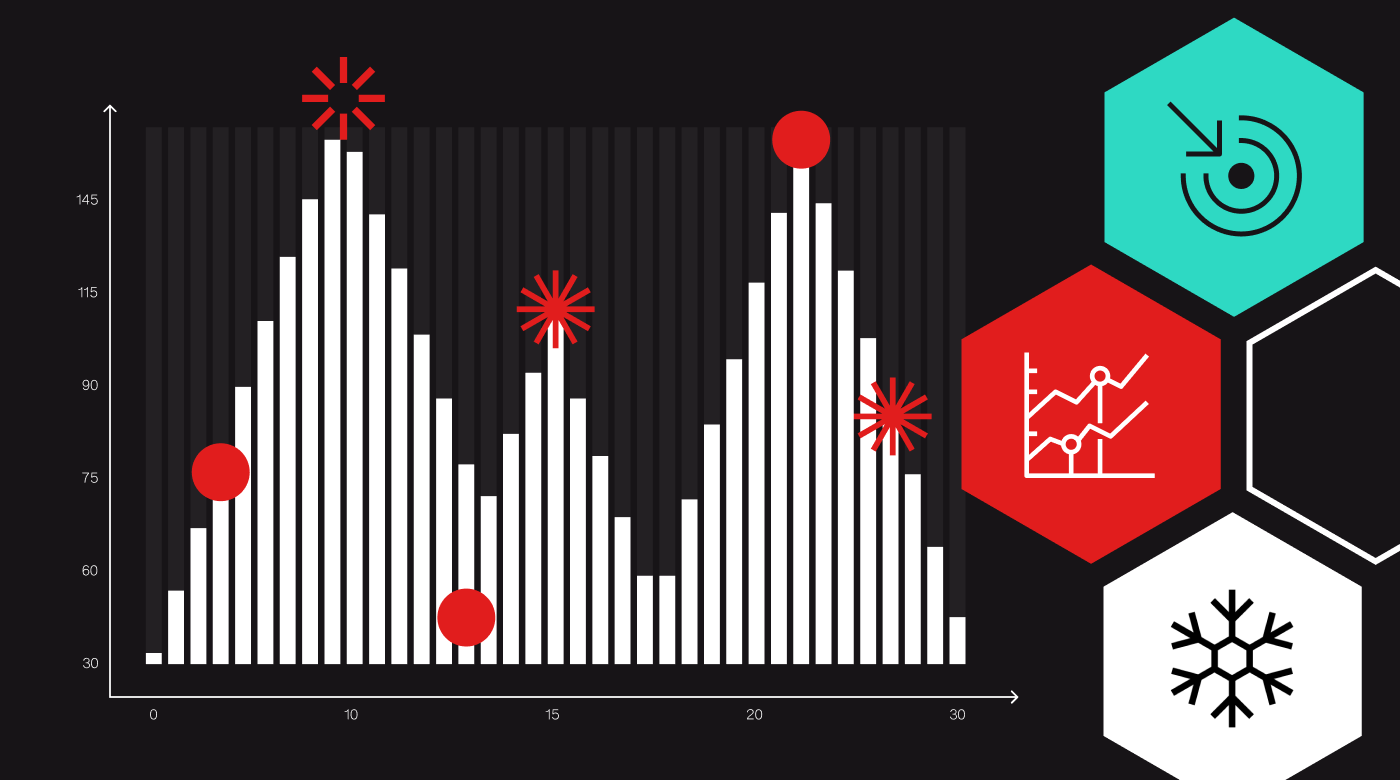

As a result, we at Foursquare have made a point of consistently and frequently analyzing our data, the models that help curate it, and the methods that go into aggregating more than 120M POIs worldwide. Each month, countless hours of work go into reviewing and evaluating the process across our various sources of data, such as ground truth datasets, authoritative third-party sources, and our own first-party data. Because of this due diligence, we occasionally find ways to improve our dataset, whether through the POI updates we communicate on a monthly basis or, as in this month’s example, by improving one of the machine learning models that is crucial in curating this data.

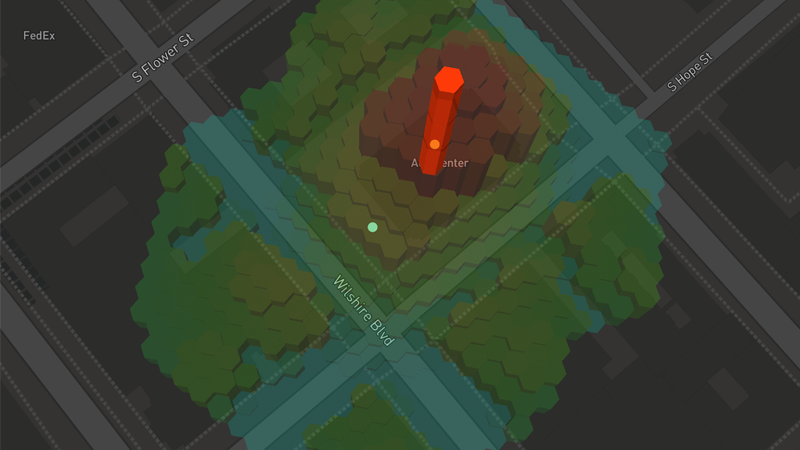

For those who are unfamiliar, Geosummarizer is the model that generates a final lat/long for a POI based on an analysis of geocodes from the various inputs within its cluster. These inputs include our own user-generated content and third-party crawls, among many others. This matters because geocodes represent the final, integrated view of where a place is located in the real world.
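To make the idea of collapsing a cluster of candidate geocodes into one final lat/long concrete, here is a minimal sketch. It is not Geosummarizer itself, whose internals are not described here. It assumes a simple stand-in technique, the coordinate-wise median, and the function name `summarize_geocodes` is hypothetical.

```python
from statistics import median


def summarize_geocodes(candidates):
    """Collapse candidate (lat, lon) pairs for one POI into a single point.

    Illustrative stand-in only: takes the coordinate-wise median, which is
    robust to a few badly misplaced inputs. It is not the actual model and
    does not handle clusters that straddle the antimeridian.
    """
    if not candidates:
        raise ValueError("need at least one candidate geocode")
    lats = [lat for lat, _ in candidates]
    lons = [lon for _, lon in candidates]
    return median(lats), median(lons)


# Four inputs roughly agree; the fifth is an outlier far from the rest.
cluster = [
    (40.0000, -74.0000),
    (40.0001, -74.0001),
    (40.0002, -74.0002),
    (40.0001, -74.0003),
    (41.0000, -73.0000),
]
final_lat, final_lon = summarize_geocodes(cluster)
```

A median is used here rather than a mean so that a single stray input, such as a geocode that landed in the wrong city, does not drag the final point away from the inputs that agree.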

For use cases such as mapping or delivery, an accurate geocode is essential because it is a component in the algorithm that generates a “dropoff” point. A “dropoff” geocode is defined as the point in the center of the street orthogonally in front of the POI’s rooftop. These geocodes are targeted at use cases like rideshare, mapping, and delivery, or any tech that helps a driver find where to drop off a passenger. Accurate geocodes can make all the difference in achieving a successful outcome for these use cases.
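The “dropoff” definition above is essentially an orthogonal projection of the rooftop point onto the street. The sketch below illustrates that geometry only. It assumes the street centerline is given as a single straight segment and uses a local flat-earth approximation, which is reasonable over a city block but not near the poles. The function name `dropoff_point` and its parameters are hypothetical, not part of any Foursquare API.

```python
import math

EARTH_RADIUS_M = 6_371_000.0


def dropoff_point(rooftop, street_start, street_end):
    """Project a rooftop (lat, lon) onto a street centerline segment.

    All points are (lat, lon) in degrees. Uses a local planar approximation
    anchored at street_start, so it is only suitable for short distances.
    """
    lat0, lon0 = street_start
    scale = math.cos(math.radians(lat0))  # shrink longitude by latitude

    def to_xy(point):
        lat, lon = point
        x = math.radians(lon - lon0) * scale * EARTH_RADIUS_M
        y = math.radians(lat - lat0) * EARTH_RADIUS_M
        return x, y

    px, py = to_xy(rooftop)
    bx, by = to_xy(street_end)  # street_start is the origin (0, 0)
    seg_len_sq = bx * bx + by * by
    if seg_len_sq == 0.0:
        return street_start  # degenerate segment: only one possible point

    # Fraction along the segment of the perpendicular foot, clamped so the
    # result never falls beyond either end of the street segment.
    t = max(0.0, min(1.0, (px * bx + py * by) / seg_len_sq))
    x, y = t * bx, t * by
    lat = lat0 + math.degrees(y / EARTH_RADIUS_M)
    lon = lon0 + math.degrees(x / (EARTH_RADIUS_M * scale))
    return lat, lon


# A rooftop just north of the midpoint of an east-west street on the equator.
drop = dropoff_point((0.001, 0.0005), (0.0, 0.0), (0.0, 0.001))
```

Because the projection starts from the rooftop geocode, any error in that geocode carries straight through to the dropoff point, which is why the accuracy of the underlying lat/long matters so much for these use cases.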

Ultimately, the improved Geosummarizer model increased the accuracy of these geocodes by reducing the median distance between them in our dataset by 20%.
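For readers who want to compute a similar metric on their own data, here is a minimal sketch of a median-distance comparison. It rests on an assumption that is not spelled out above: that the distance is measured between each generated geocode and a reference location for the same POI. The function names are hypothetical, and this is not a description of how the 20% figure was produced.

```python
import math
from statistics import median

EARTH_RADIUS_M = 6_371_000.0


def haversine_m(a, b):
    """Great-circle distance in meters between two (lat, lon) points in degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat = lat2 - lat1
    dlon = lon2 - lon1
    h = (math.sin(dlat / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))


def median_error_m(predicted, reference):
    """Median distance between paired predicted and reference geocodes."""
    return median([haversine_m(p, r) for p, r in zip(predicted, reference)])


def relative_reduction(old, new):
    """Fractional drop from old to new, e.g. 0.2 for a 20% reduction."""
    return (old - new) / old
```

With these helpers, comparing two model versions amounts to calling `median_error_m` once per version against the same reference points and passing the two results to `relative_reduction`.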

Moving forward, we would like to share more insights into the improvements taking place behind the scenes here at Foursquare. To learn more about the latest data improvements and Geosummarizer, watch this webinar where Foursquare’s leading data team will cover this process in depth.

If you’re interested in working with Foursquare, download our sample data here or connect with our team and start your geospatial journey.